Update (May 2025): Since the initial deployment of a local instance (advertised as a beta, and basically running a stock configuration), Jitsi upstream has seen lots of development and Debamax hasn’t been able to spend enough time to keep the service running optimally. The instance has been shut down accordingly.

Introduction

Videoconferencing with the official meet.jit.si instance has always been a pleasure, but it seemed a good idea to research how to install a local Jitsi instance, one that could be used by customers, by members of the local Linux Users Group (COAGUL), or by anyone else.

Update (April 2020): Since the first publication of this article, the “Jitsi configuration” section has been updated to reflect changes upstream. A “More about STUN servers” section has been added as well.

Networking vs. virtualization host

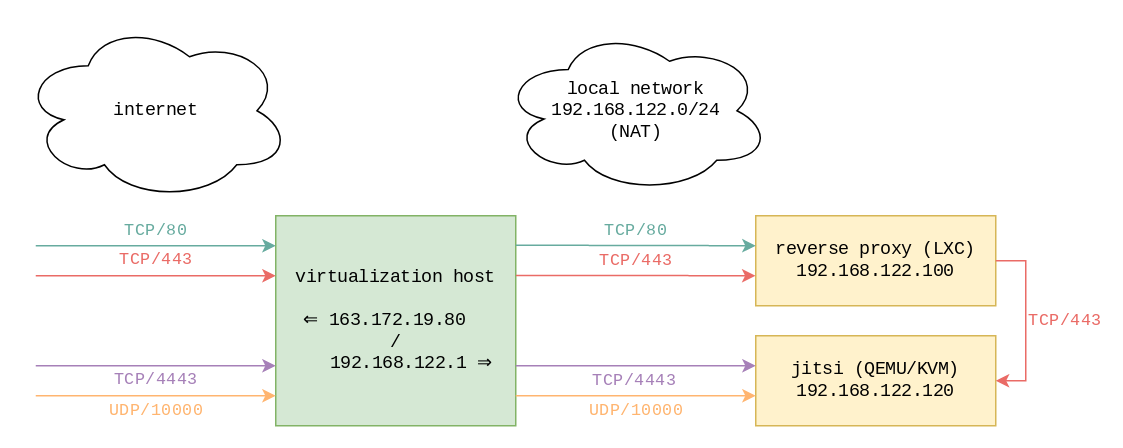

One host was already set up as a virtualization environment, featuring libvirt, managing LXC containers and QEMU/KVM virtual machines. In this article, we focus on IPv4 networking. Basically, the TCP/80 and TCP/443 ports are exposed on the public IP and NAT’d to one particular container, which acts as a reverse proxy. The Apache server running there defines as many VirtualHosts as there are services, and proxies each of them to the appropriate LXC container or QEMU/KVM virtual machine.

Schematically, here’s what happens:

- Both TCP/80 and TCP/443 ports on 163.172.19.80 (the public IP) are NAT’d to the same ports on 192.168.122.100, the reverse proxy’s IP address on the default libvirt bridge (192.168.122.0/24).

- Depending on the server name in the HTTP request, the apache2 service running in that container will proxy requests to the appropriate service in another container or virtual machine. This could be something like http://192.168.122.110:8080 for a Jenkins service or something like https://192.168.122.120/ for a Jitsi service.

- As a general rule, the apache2 configuration only returns an HTTP redirection pointing at the same service over HTTPS, except for the .well-known/acme-challenge path, to make the certificate dance for Let’s Encrypt possible.

What does that mean for the Jitsi installation? Well, Jitsi expects those ports to be available:

- TCP/443

- TCP/4443

- UDP/10000

For this specific host, TCP/4443 and UDP/10000 were available, and have been NAT’d as well to the Jitsi virtual machine directly. Given the existing services, the same couldn’t be done for the TCP/443 port, which explains the need for the following section.

Note: A summary of the host’s iptables configuration is available in the annex at the bottom of this article.

Apache as a reverse proxy

A new VirtualHost was defined on the apache2 service running as reverse proxy. The important parts are quoted below:

    <VirtualHost *:80>
        ServerName jitsi.debamax.com
        RedirectMatch permanent ^(?!/\.well-known/acme-challenge/).* https://jitsi.debamax.com/
    </VirtualHost>

    <VirtualHost *:443>
        SSLProxyEngine on
        SSLProxyVerify none
        SSLProxyCheckPeerCN off
        SSLProxyCheckPeerName off
        SSLProxyCheckPeerExpire off
        ProxyPass / https://192.168.122.120/
        ProxyPassReverse / https://192.168.122.120/
    </VirtualHost>
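
The RedirectMatch pattern in the TCP/80 VirtualHost relies on a negative lookahead, which is easy to get wrong. The following sketch feeds a few sample paths to the same pattern to see which ones would be redirected to HTTPS. The is_redirected helper and the sample paths are made up for illustration, and the script assumes GNU grep built with PCRE support (the -P option).

```shell
#!/bin/sh
# Pattern copied from the RedirectMatch directive of the TCP/80 VirtualHost.
pattern='^(?!/\.well-known/acme-challenge/).*'

# is_redirected PATH (hypothetical helper): prints "redirect" when PATH
# matches the pattern, and would therefore be sent to HTTPS, or "pass" when
# it is left alone for the Let's Encrypt challenge.
# Assumption: GNU grep with PCRE support (-P).
is_redirected() {
    if printf '%s\n' "$1" | grep -qP -- "$pattern"; then
        echo redirect
    else
        echo pass
    fi
}

# Sample paths, chosen for illustration only.
for path in / /some/room /.well-known/acme-challenge/token; do
    printf '%-40s %s\n' "$path" "$(is_redirected "$path")"
done
```

Only paths starting with /.well-known/acme-challenge/ (trailing slash included) escape the redirection; everything else under /.well-known/ is still redirected.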

The redirections set up on the TCP/80 port were already mentioned in the previous section, so let’s concentrate on the TCP/443 port part.

The ProxyPass and ProxyPassReverse directives act on /, meaning every path will be proxied to the Jitsi virtual machine. If one wasn’t using VirtualHost directives to distinguish between services, one could be dedicating some specific paths (“subdirectories”) to Jitsi, and proxying only those to the Jitsi instance. But let’s concentrate on the simpler “the whole VirtualHost is proxied” case.

The first SSLProxyEngine on directive is needed for apache2 to be happy with proxying requests to a server using HTTPS, instead of plain HTTP.

All other SSLProxy* directives aren’t too nice as they disable all checks! Why do that, then? The answer is that Jitsi’s default installation is setting up an NGINX server with HTTP-to-HTTPS redirections, and it seemed easier to directly forward requests to the HTTPS port, disabling all checks since that NGINX server was installed with a self-signed certificate. One could deploy a suitable certificate there instead and enable the checks again, instead of using this “StackOverflow-style heavy hammer” (some directives might not even be needed).

Jitsi configuration

Jitsi itself was installed on a QEMU/KVM virtual machine, running a basic Debian 10 (buster) system, initially provisioned with 2 CPUs, 4 GB RAM, 3 GB virtual disk. Its IP address is 192.168.122.120, which is what was configured as the target of the ProxyPass* directives in the previous section.

The installation was done using the quick-install.md documentation, entering jitsi.debamax.com as the FQDN, and opting for a self-signed certificate (leaving the reverse proxy in charge of the Let’s Encrypt certificate dance, like it does for all VirtualHosts).

Update (April 2020): Since late March 2020, upstream switched from videobridge to videobridge2. Another important change is that the jitsi-meet-turnserver package is pulled through jitsi-meet’s Recommends, as can be seen in APT metadata (wrapped for readability):

    # apt-cache show jitsi-meet=1.0.4335-1
    Depends: jitsi-videobridge2 (= 2.1-157-g389b69ff-1),
             jicofo (= 1.0-539-1),
             jitsi-meet-web (= 1.0.3928-1),
             jitsi-meet-web-config (= 1.0.3928-1),
             jitsi-meet-prosody (= 1.0.3928-1)
    Recommends: jitsi-meet-turnserver (= 1.0.3928-1) | apache2

TURN servers make it possible for clients to exchange streams in a peer-to-peer fashion when there are only two of them, by finding a way to traverse NATs. In the setup being documented here, the easiest option is not to install the jitsi-meet-turnserver package (as documented recently in quick-install.md).

Now, a very important point needs to be addressed (no pun intended). It isn’t so much related to running behind a reverse proxy as to the fact that the TCP/4443 and UDP/10000 ports are NAT’d: the videobridge component needs to know about both the public IP and the local IP. In this context, the local IP is that of the Jitsi virtual machine, which is where the NAT for TCP/4443 and UDP/10000 points to; it is not the reverse proxy’s local IP. That’s why the following lines have to be added to the /etc/jitsi/videobridge/sip-communicator.properties configuration file:

    org.ice4j.ice.harvest.NAT_HARVESTER_LOCAL_ADDRESS=192.168.122.120
    org.ice4j.ice.harvest.NAT_HARVESTER_PUBLIC_ADDRESS=163.172.19.80

[ Hint: Beware, there’s another sip-communicator.properties configuration file, for the jicofo component! ]

Additionally, a default setting needs to be commented out (in the same file), because the TURN server isn’t installed:

#org.ice4j.ice.harvest.STUN_MAPPING_HARVESTER_ADDRESSES=meet-jit-si-turnrelay.jitsi.net:443

Remember to restart the service:

systemctl restart jitsi-videobridge2

Update (April 2020): Until late March 2020, this systemd service unit used to be called jitsi-videobridge instead.
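
The edits described in this section can also be scripted. The following is only a sketch under a few assumptions: configure_harvester is a hypothetical helper name (nothing shipped by Jitsi), awk is available, and the file holds one property per line. It drops any existing NAT harvester lines, comments out an active STUN mapping harvester line, and appends the two addresses, keeping a .bak copy of the original file.

```shell
#!/bin/sh
# configure_harvester FILE LOCAL_IP PUBLIC_IP (hypothetical helper)
# Rewrites FILE so that it carries the two NAT harvester properties and no
# active STUN mapping harvester line. The original is saved as FILE.bak.
configure_harvester() {
    file=$1
    local_ip=$2
    public_ip=$3

    cp -- "$file" "$file.bak"
    {
        # Drop previous NAT harvester settings, comment out an active
        # STUN_MAPPING_HARVESTER_ADDRESSES line, keep everything else.
        awk '
            /^org\.ice4j\.ice\.harvest\.NAT_HARVESTER_(LOCAL|PUBLIC)_ADDRESS=/ { next }
            /^org\.ice4j\.ice\.harvest\.STUN_MAPPING_HARVESTER_ADDRESSES=/ { print "#" $0; next }
            { print }
        ' "$file.bak"

        # Append the addresses matching the NAT setup.
        printf '%s\n' \
            "org.ice4j.ice.harvest.NAT_HARVESTER_LOCAL_ADDRESS=$local_ip" \
            "org.ice4j.ice.harvest.NAT_HARVESTER_PUBLIC_ADDRESS=$public_ip"
    } > "$file"
}
```

With the values used in this article, that would be called as configure_harvester /etc/jitsi/videobridge/sip-communicator.properties 192.168.122.120 163.172.19.80, as root, and followed by the service restart mentioned above.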

More about STUN servers

A privacy-concerned user was kind enough to inform a number of Jitsi instance administrators (including us) that the default Jitsi configuration uses Google’s STUN servers. This was fixed through a recent pull request: config: use Jitsi’s STUN servers by default, instead of Google’s.

Without waiting for a new upstream release, administrators can tweak their local configuration (in /etc/jitsi/meet/F.Q.D.N-config.js). The change can be verified client-side by running tcpdump and checking that packets are seen when a 2-participant conversation is set up:

tcpdump host meet-jit-si-turnrelay.jitsi.net
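
On the server side, a quick way to see which STUN servers a configuration file currently mentions is to extract the URLs from it. This is a rough sketch: list_stun_urls is a hypothetical helper, it assumes the servers are written as stun:host:port URLs, and it does not distinguish active lines from commented-out ones.

```shell
#!/bin/sh
# list_stun_urls FILE (hypothetical helper): prints each distinct
# stun:host:port URL found in FILE, commented-out lines included.
list_stun_urls() {
    grep -o -- 'stun:[A-Za-z0-9.-]*:[0-9][0-9]*' "$1" | sort -u
}
```

Running it against /etc/jitsi/meet/F.Q.D.N-config.js (with the actual FQDN substituted) before and after the tweak shows whether any unwanted server is still listed.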

For completeness: Jitsi’s own infrastructure relies on Amazon Web Services at the moment.

Annex: host networking configuration

The relevant iptables rules on the host are the following (leaving aside the usual MASQUERADE rules, which are required when using NAT):

    Chain FORWARD (filter table)
    target  prot  opt  source     destination
    ACCEPT  tcp   --   0.0.0.0/0  192.168.122.100  tcp dpt:80
    ACCEPT  tcp   --   0.0.0.0/0  192.168.122.100  tcp dpt:443
    ACCEPT  tcp   --   0.0.0.0/0  192.168.122.120  tcp dpt:4443
    ACCEPT  udp   --   0.0.0.0/0  192.168.122.120  udp dpt:10000

    Chain PREROUTING (nat table)
    target  prot  opt  source     destination
    DNAT    tcp   --   0.0.0.0/0  163.172.19.80    tcp dpt:80 to:192.168.122.100:80
    DNAT    tcp   --   0.0.0.0/0  163.172.19.80    tcp dpt:443 to:192.168.122.100:443
    DNAT    tcp   --   0.0.0.0/0  163.172.19.80    tcp dpt:4443 to:192.168.122.120:4443
    DNAT    udp   --   0.0.0.0/0  163.172.19.80    udp dpt:10000 to:192.168.122.120:10000
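
For readers who want to reproduce a similar setup, here is a sketch of iptables commands that would produce equivalent rules. The emit_forward helper is hypothetical and only prints the commands rather than running them. How the rules were actually loaded on this host isn’t documented here, and appending with -A is an assumption: depending on the rules libvirt manages itself, the FORWARD entries might need to be inserted earlier in the chain instead.

```shell
#!/bin/sh
# Public IP taken from this article; adjust for another host.
PUBLIC_IP=163.172.19.80

# emit_forward PROTO PORT TARGET_IP (hypothetical helper): prints, without
# running them, the DNAT and FORWARD rules for one forwarded port.
emit_forward() {
    proto=$1
    port=$2
    target=$3
    echo "iptables -t nat -A PREROUTING -d $PUBLIC_IP -p $proto --dport $port -j DNAT --to-destination $target:$port"
    echo "iptables -A FORWARD -d $target -p $proto --dport $port -j ACCEPT"
}

# Reverse proxy container: HTTP and HTTPS.
emit_forward tcp 80 192.168.122.100
emit_forward tcp 443 192.168.122.100
# Jitsi virtual machine: videobridge ports.
emit_forward tcp 4443 192.168.122.120
emit_forward udp 10000 192.168.122.120
```

The printed commands can be reviewed first, then run as root once they look right.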